AI is making engineers faster. So why does everything feel slower?

AI tools speed up developers, but delivery feels slower. Here is what the data tells us about the gap between output and absorption.

Why developers feel fast while delivery feels slow

Ask any developer using Claude Code, Cursor, or GitHub Copilot how they feel about their tools, and you will hear something close to enthusiasm. They draft threat analysis documents in minutes. They identify vulnerabilities that need patching. They generate design docs, diagrams, and work plans. They knock out boilerplate that used to eat half a day. The tools are, by a fair margin, the most useful thing to happen to software engineering in a decade.

And yet. Ask the engineering leader running that same team how delivery is going, and you will hear something different. PRs are stacking up in review queues. Incidents are climbing. Senior engineers are buried. Releases feel slower, not faster, and nobody can quite explain why.

Both things are true at once. That is the problem worth understanding, because it changes how you measure engineering productivity, how you invest in tooling, and how you make headcount decisions in a market that is pushing leaders to act before the data is in.

Drawing on two years of telemetry from 22,000 developers across 4,000 teams, the AI Engineering Report 2026 measures what happens when AI moves from assistant to author. The throughput numbers are real. So is the gap between what gets written and what the system can absorb. Let's break this down.

Developers are genuinely thrilled. They have reason to be.

Developers using AI tools are not pretending. The productivity gains they feel are real, and in many cases they are extraordinary.

A senior engineer can now draft a threat model for a new service in an afternoon instead of a week. Architectural decision records that used to require dedicated writing time get drafted alongside the work itself. Security reviews surface vulnerabilities the team would have missed. Onboarding documentation gets written, updated, and kept current. Diagrams that nobody had time to make finally get made. The surrounding work of software engineering, the documentation, the analysis, the planning, the communication, has been transformed.

And yes, AI also writes code. Quickly. Idiomatically. In ways that are often indistinguishable from what an experienced engineer would produce on a good day.

This is the part of the story that is being told accurately. AI is genuinely good at a great many things developers do. Throughput data backs up the felt experience: task completion is up 33.7% per developer, and tasks that involve code specifically are up more than six times that at the team level. Epics completed per developer are up 66.2%. Those are not noise. Those are real gains in real engineering output.

If the story stopped there, this would be a different post. The story does not stop there.

What feels productive at the desk is not the same as what is productive

Here is where the felt experience and the measured experience start to diverge, and the divergence is sharper than most leaders realize.

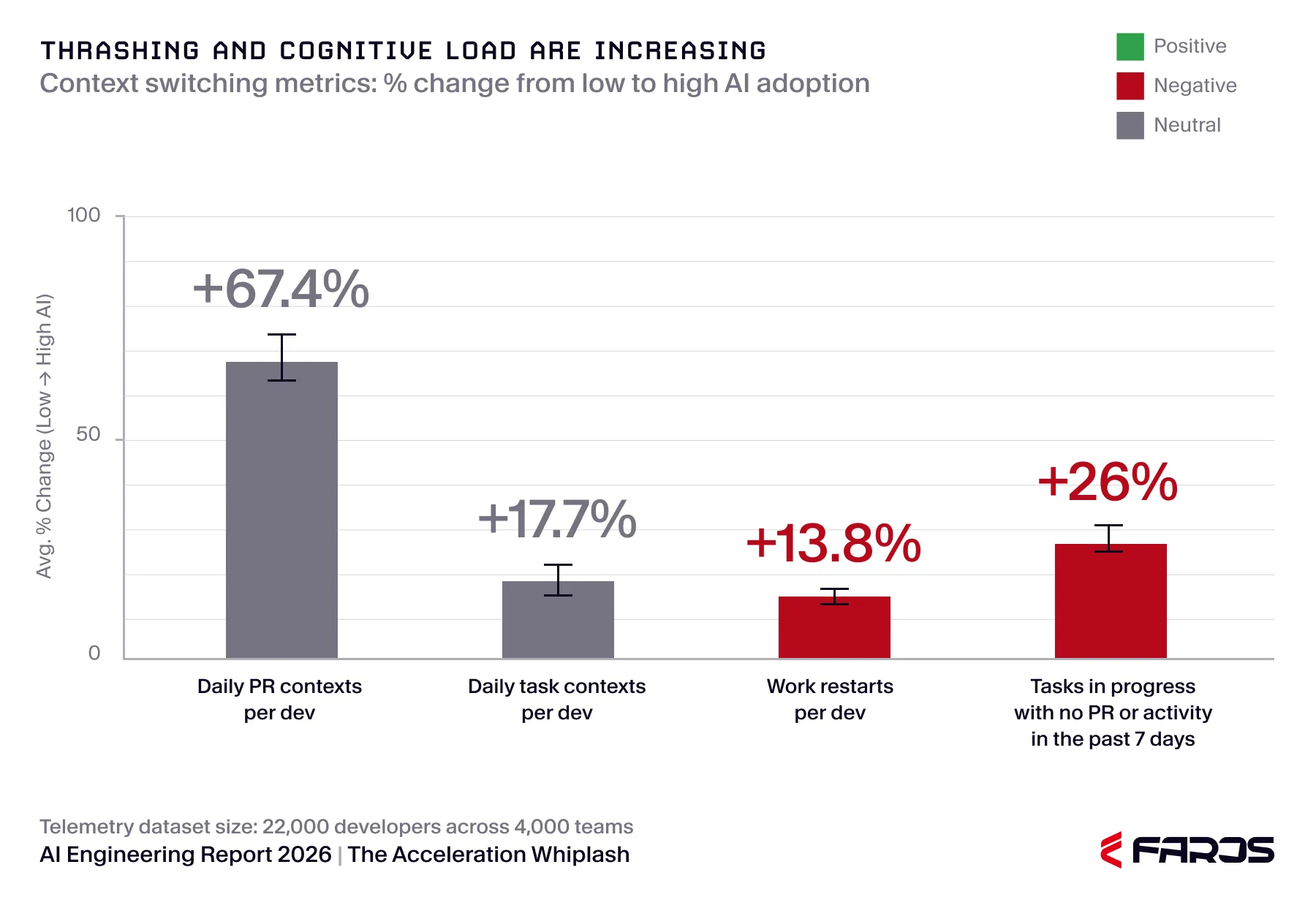

Throughput is up. That part is real. But the same telemetry shows that under high AI adoption, daily PR contexts per developer are up 67.4%. Daily task contexts are up 17.7%. Work restarts (tasks moving back to in-progress after another stage) are up 13.8%. And 26% more in-progress tasks show no PR or activity for seven or more days.

That last number is worth sitting with. It describes work that was started, claimed capacity, and then stalled. The picture is of a development environment where it is easier than ever to begin something and harder than ever to finish it.
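To make those two signals concrete, here is a minimal sketch of how a team might flag stalled tasks and count work restarts from its own tracker data. The `Task` record, its field names, and the status labels are hypothetical, not the report's schema, and "stalled" is assumed to mean in progress with no linked PR and no activity for seven or more days.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


# Hypothetical task record; field and status names are illustrative only.
@dataclass
class Task:
    task_id: str
    status: str               # e.g. "todo", "in_progress", "review", "done"
    last_activity: datetime   # latest commit, comment, or status change
    has_pr: bool              # True if any PR is linked to the task


STALL_THRESHOLD = timedelta(days=7)


def is_stalled(task: Task, now: datetime) -> bool:
    """In progress, no linked PR, and no activity for seven or more days."""
    return (
        task.status == "in_progress"
        and not task.has_pr
        and now - task.last_activity >= STALL_THRESHOLD
    )


def stalled_share(tasks: list[Task], now: datetime) -> float:
    """Fraction of in-progress tasks that are stalled (0.0 if none are in progress)."""
    in_progress = [t for t in tasks if t.status == "in_progress"]
    if not in_progress:
        return 0.0
    return sum(is_stalled(t, now) for t in in_progress) / len(in_progress)


def count_restarts(status_history: list[str]) -> int:
    """Count re-entries into "in_progress" after the task had already been there."""
    restarts = 0
    seen_in_progress = False
    previous = None
    for status in status_history:
        if status == "in_progress" and previous != "in_progress":
            if seen_in_progress:
                restarts += 1
            seen_in_progress = True
        previous = status
    return restarts
```

Tracking `stalled_share` week over week, rather than as a one-off snapshot, is what would show whether cheap initiation is outrunning completion on a given team.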

This is the first crack in the productivity story. AI made initiation cheap. It did not make completion cheap. Developers, newly capable of opening more threads at once, do exactly that, and the cognitive load of managing all those parallel threads becomes its own tax.

None of this contradicts the genuine value developers get from AI. It does suggest that "I feel more productive" and "we are more productive" are no longer the same statement. They have come apart, and engineering productivity measurement has to account for that.

At the system level, the picture changes completely

Step back from the individual developer to the workflow that connects them, and the data gets harder to look at.

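The average time a task spends in progress is up 225.2%. The average time it spends in a wait state, where it is queued and blocked rather than actively progressing, is up 81.8%. Median time to first PR review is up 156.6%. Median time in PR review is up 441.5%. To put the largest figure in multiplicative terms, a 441.5% increase means the median PR spends about 5.4 times as long in review as it did at baseline.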

These are not productivity metrics. These are flow metrics, and they are pointing in exactly the wrong direction. Work is moving more slowly through every stage of the pipeline, even as the volume of work entering the pipeline accelerates.
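For readers who want to reproduce this kind of flow metric on their own data, the following is a rough sketch of a median time to first review and the percent change between two periods. The function names and the input shape (pairs of opened and first-review timestamps) are assumptions for illustration, not how the report computes its figures.

```python
from datetime import datetime
from statistics import median
from typing import Iterable, Optional, Tuple


def hours_between(start: datetime, end: datetime) -> float:
    """Elapsed time between two timestamps, in hours."""
    return (end - start).total_seconds() / 3600


def median_hours_to_first_review(
    prs: Iterable[Tuple[datetime, Optional[datetime]]],
) -> Optional[float]:
    """Median wait from PR opened to first review.

    Each item is a hypothetical (opened_at, first_review_at) pair.
    PRs that never received a review (None) are skipped.
    """
    waits = [hours_between(opened, reviewed) for opened, reviewed in prs if reviewed is not None]
    return median(waits) if waits else None


def percent_change(baseline: float, current: float) -> float:
    """Relative change from baseline to current, as a percentage."""
    return (current - baseline) / baseline * 100.0


def multiplier(percent_increase: float) -> float:
    """Convert 'up X%' into 'X times the baseline'."""
    return 1.0 + percent_increase / 100.0
```

With made-up medians of 4 hours before and 10 hours after, `percent_change(4.0, 10.0)` gives 150.0, and `multiplier(150.0)` gives 2.5. The same conversion is why an increase of several hundred percent means work is taking several times as long, not just somewhat longer.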

The reason matters. Reviewers are not slow. They are buried. The code arriving for review is larger (PR size up 51.3%), touches more files (files per PR up 59.7%), and frequently arrives in a state that is not review-ready. AI-generated code presents a particular challenge: it is often superficially convincing. Idiomatic, well-named, stylistically consistent. The structural and logical failures, when they exist, are beneath the surface. Catching them requires careful reading and reasoning, the kind of work senior engineers are uniquely equipped to do, and it is consuming them.

Meanwhile, 31.3% more PRs are merging without any review at all. The gates are not just slow. In a meaningful share of cases, they are open.
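Checking how open your own gates are is a small query. A minimal sketch, assuming a hypothetical `MergedPR` record whose `review_count` holds the number of reviews submitted by someone other than the author:

```python
from dataclasses import dataclass


# Hypothetical record of a merged PR; names are illustrative only.
@dataclass
class MergedPR:
    pr_id: int
    review_count: int  # reviews from anyone other than the author


def unreviewed_merge_share(prs: list[MergedPR]) -> float:
    """Fraction of merged PRs that received no review at all (0.0 for an empty list)."""
    if not prs:
        return 0.0
    unreviewed = sum(1 for pr in prs if pr.review_count == 0)
    return unreviewed / len(prs)
```

What counts as a review (an approval, any comment, an automated check) is a definitional choice each team has to make; the sketch leaves it to whatever populates `review_count`.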

DORA's 2025 report reached a different conclusion: that strong engineering foundations protect organizations from AI's downsides. The Faros telemetry does not show that protective effect. High-performing organizations are seeing the same downstream deterioration as everyone else. The discrepancy is informative. DORA's findings come from surveys, which capture how developers feel. Telemetry captures what the systems are actually doing. Right now, those two views are telling different stories, and the survey lag is real.

Engineering systems were not built to absorb what AI is producing

Pre-AI engineering processes assumed two things: a roughly bounded volume of code per developer per week, and a roughly consistent quality baseline for that code. Review queues, QA cycles, deployment cadences, incident response capacity — all of it was sized for those assumptions.

AI broke both assumptions at once. Volume exploded. Some 60% of AI-generated code is now being accepted into codebases (up from 20% a year ago), and acceptance rates that high mean AI is no longer assisting authorship; it is doing it. Quality became inconsistent in a way that surface-level review does not catch reliably.

The result is a system designed for human-paced output trying to absorb machine-paced output, and the strain shows up at every downstream stage. This is not a problem you fix by hiring more reviewers, expanding QA, or investing in faster incident response. Those measures treat symptoms. The strain is structural.

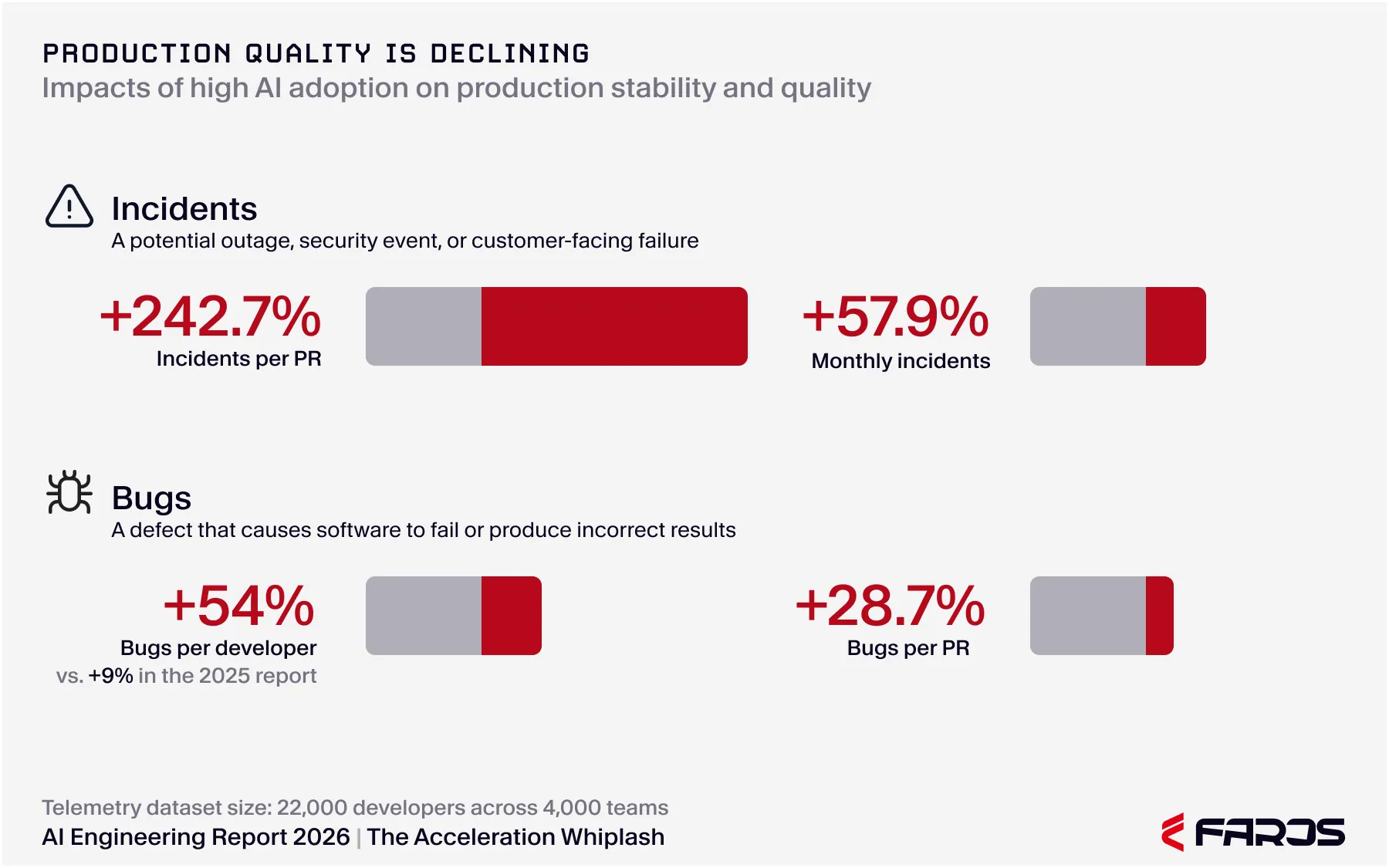

This is what we mean by the AI Acceleration Whiplash: AI is producing code faster than ever, and the quality of what reaches production is degrading at the same time. Bugs per developer up 54%. Incidents per PR more than tripled. 31.3% more PRs merging without any review. The acceleration is real, and so is the cost it is loading onto the systems that customers actually depend on. The flow slowdowns at review and QA are the visible symptom of the same underlying problem: code arriving in a state the engineering system was never built to absorb safely.

How do you measure developer productivity when AI changes the equation?

The short answer: stop measuring activity, start measuring flow and outcomes.

Activity metrics (lines of code, commits, PRs opened) are the metrics AI inflates most easily, which is precisely why they have become the least useful indicators of actual engineering productivity. A developer can open ten PRs in a morning with AI assistance. Whether any of those PRs improve the product, ship to production, or hold up under load is a separate question, and it is the only question that matters.

The measurements that hold up in the AI era are the ones that capture what survives the full pipeline. Lead time from commit to production. Incident-to-PR ratio. Bug rate per developer. Reopened ticket rate. Code churn at monthly intervals. These are software metrics that cannot be gamed by generation speed, because they only count work that actually made it through.
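Two of those measurements can be sketched in a few lines. This is an illustrative outline under stated assumptions, not a definitive implementation: the `Change` record and its fields are hypothetical, lead time is measured from first commit to production deploy, and changes that never reached production are excluded rather than counted as zero.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


# Hypothetical record of one change moving through the pipeline.
@dataclass
class Change:
    first_commit_at: datetime
    deployed_at: Optional[datetime]  # None if it never reached production


def median_lead_time_hours(changes: list[Change]) -> Optional[float]:
    """Median hours from first commit to production, counting only deployed changes."""
    durations = [
        (c.deployed_at - c.first_commit_at).total_seconds() / 3600
        for c in changes
        if c.deployed_at is not None
    ]
    return median(durations) if durations else None


def incidents_per_pr(incident_count: int, merged_pr_count: int) -> Optional[float]:
    """Incident-to-PR ratio for a period; None when no PRs were merged."""
    if merged_pr_count == 0:
        return None
    return incident_count / merged_pr_count
```

Because both functions only see work that was deployed or merged, opening more PRs does not move either number on its own, which is the property that makes them hard to inflate.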

This is why developer productivity measurement tools that rely primarily on developer surveys are struggling right now. Surveys capture perception, and perception lags reality. By the time a developer's "I feel productive" sentiment shifts to reflect quality problems, the incidents have been accumulating in production for months. Telemetry-based measurement, drawn directly from the systems where the work happens, does not have that lag. For engineering leaders making consequential decisions about headcount, tooling, and process, that timing difference is the difference between acting on signal and acting on stale data.

The gap between output and absorption is widening, not stabilizing

The most consequential finding in the report, for any leader trying to forecast where this is headed, is that the gap is not closing. As AI adoption deepens within an organization, the downstream metrics get worse, not better. There is no point in the data where the system catches up.

That is the part that should change how engineering leaders are thinking about this. The intuition that says "we are still in the early innings, the tools will get better, our processes will adapt" is being tested by the telemetry, and the telemetry is not cooperating. Stronger pre-AI engineering practices do not insulate organizations from the effect. Maturity is not a moat. The AI acceleration whiplash arrives at high-DORA organizations and low-DORA organizations alike.

The implication is that you cannot wait this out. Whatever process changes are required to absorb AI output safely, they have to start happening now, before agentic authoring (currently less than 1% of PRs) scales further and puts another order of magnitude of pressure on the same downstream systems.

Why this matters more than productivity gains

The temptation is to treat this as a productivity story. More code, less code. Faster, slower. That framing misses the stakes.

What the data actually describes is a stability and risk story. Bugs per developer are up 54%. Incidents per PR have more than tripled. Monthly incidents are up 57.9%. AI-generated code is now running in production systems across finance, healthcare, and infrastructure. The gap between what gets shipped and what holds up under real load is where the business risk lives.

Engineering leaders making decisions right now about headcount reductions, AI investment scale, and team structure are making those decisions against a backdrop where the quality signal is deteriorating faster than most internal dashboards are picking up. The right response is not to slow AI adoption. It is to invest in the visibility and the AI transformation monitoring that show what AI is actually doing to your engineering system, so the decisions you make are based on what the systems are telling you, not what people feel.

What comes next

The metrics say developers are faster. The reality says delivery is slowing. Both of these statements are accurate, and both are pointing at the same underlying phenomenon.

In future posts, we will look at the side of this story most leaders have not fully reckoned with: throughput is up, but quality is down, and the tradeoff is not getting better as adoption scales. The numbers behind that tradeoff are sharper than the productivity numbers, and they are the ones that should be driving decisions about how AI gets used inside your engineering organization.

If you want the full picture now, read the Acceleration Whiplash report. It draws on two years of telemetry from 22,000 developers and lays out, stage by stage, where the system is bending and what engineering leaders can do about it.

