AI coding ROI that survives the CFO conversation

AI coding tool prices are climbing. Learn how to build a defensible ROI calculation at the tool and team level — and justify spend to your execs.

What to measure when your AI bill grows with usage

Something is shifting in AI coding pricing, and engineering budgets are about to feel it.

Cursor moved to credit-based billing and then tightened the credits. GitHub Copilot introduced premium request surcharges. Windsurf retired its credit system in favor of daily quotas. Anthropic and OpenAI rolled out tiered consumption pricing across their enterprise plans. Gartner is now predicting that more than 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. Every frontier vendor is moving in the same direction, for the same reason: reasoning models and agentic workflows draw 5 to 20 times the tokens of simple completion, depending on task complexity, and the flat-seat price tags that carried the last two years were understating the real unit cost.

If you run engineering, your next renewal is not going to look like your last one.

If you plan to respond with data rather than a guess, the first challenge is that most engineering orgs don't have a view of AI coding ROI that holds up under scrutiny. Vendor dashboards report acceptance rate, active users, and the percentage of PRs touched by AI. Those numbers aren't wrong. They just aren't an answer to the question a CFO is about to ask: what are we getting for this, and what will we get when it costs three times as much?

Vendor dashboards weren't built to answer that question

They were built to sell more seats. Acceptance rate, active users, percent of PRs touched by AI: these track adoption, not value. Adoption is necessary, not sufficient. A team that accepts 80% of suggestions and ships them as defects didn't deliver value; it delivered work for someone else.

Faros's 2026 AI Engineering Report, The Acceleration Whiplash, analyzed telemetry from 22,000 developers across 4,000 teams and found that bugs per developer are up 54% under high AI adoption, the incident-to-PR ratio has more than tripled, median PR review time is up 441%, and code churn is up 861%. Throughput gains absorbed by downstream rework are not gains. The vendor dashboard cannot see this.

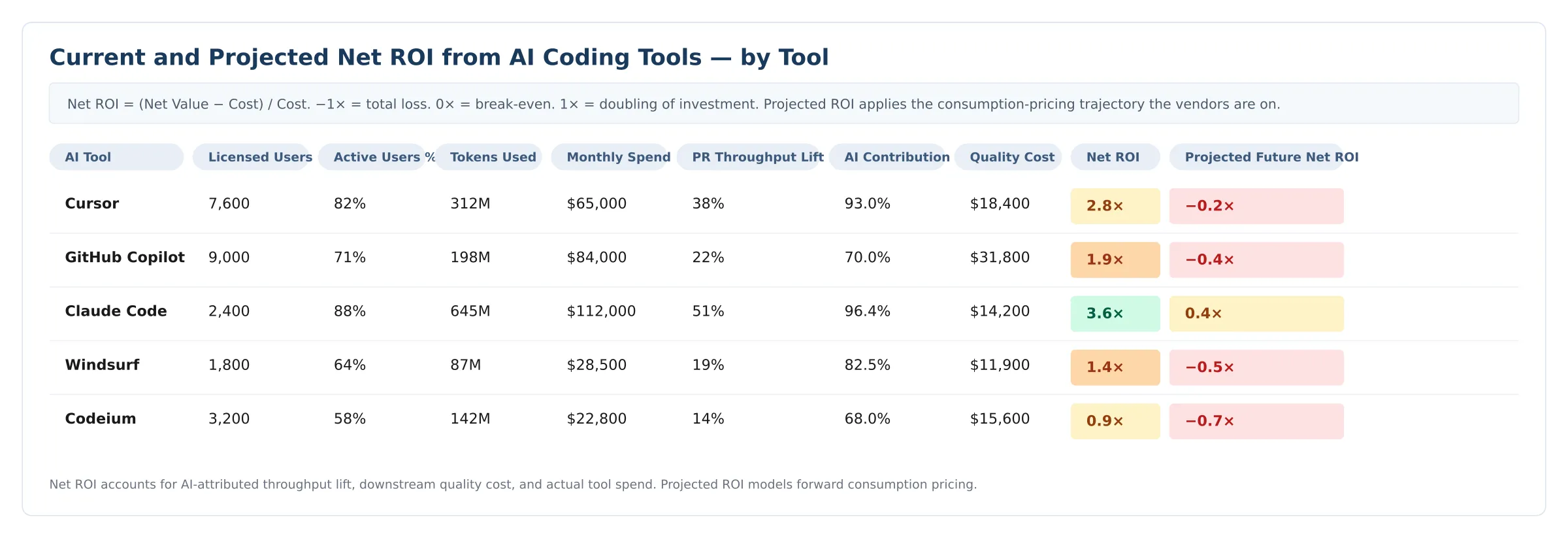

A defensible ROI number can. Here's what it looks like at the tool level.

A few tools usually earn their keep. The rest often don't.

The picture this view tends to produce isn't subtle. Most orgs running multiple assistants find a few tools clearly earning their keep, one or two that quietly aren't, and at least one whose forward-cost projection is alarming. The tool with the most usage is rarely the tool with the most net value — usage is sensitive to defaults and habit, while net value is sensitive to whether the assisted work shipped clean.

The gap between today's net ROI and the forward number is where the procurement leverage lives. A tool that breaks even today and goes deeply negative at 3× pricing is a tool to renegotiate now, not next year.

Why this number disagrees with the vendor dashboard

Three things make this calculation different from the acceptance-rate dashboard, and from the back-of-the-envelope ROI most orgs run today. It measures PR throughput lift — the productivity difference between AI-assisted and unassisted PRs from the same engineers — rather than absolute output. It applies an AI attribution factor so that improvements driven by other things (a hiring wave, an infra upgrade, easier-scoped work) don't get credited to the tool. And it nets out the quality cost, i.e., the rework, churn, and bugs generated downstream, priced at the same fully-loaded engineering rate as the throughput itself.

The number that comes out the other side is the one that survives a CFO conversation. The acceptance rate doesn't.
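As a rough illustration, the three adjustments can be combined into one net ROI figure. The sketch below is an assumption about how the pieces fit together, not the exact formula behind the charts: it expresses throughput lift as hours saved per AI-assisted PR, and every field name and input value is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ToolPeriod:
    """Inputs for one tool over one billing period (all field names are illustrative)."""
    assisted_prs: int               # AI-assisted PRs merged in the period
    hours_per_unassisted_pr: float  # baseline effort, same engineers, no AI
    hours_per_assisted_pr: float    # effort on AI-assisted PRs
    attribution_factor: float       # share of the lift credited to the tool (0 to 1)
    rework_hours: float             # downstream rework, churn, and bug-fix hours
    loaded_hourly_rate: float       # fully loaded engineering rate
    tool_cost: float                # spend on the tool in the period


def net_roi(p: ToolPeriod) -> float:
    """Return (attributed value - quality cost - tool cost) / tool cost."""
    # Throughput lift, expressed as hours saved across all assisted PRs.
    hours_saved = (p.hours_per_unassisted_pr - p.hours_per_assisted_pr) * p.assisted_prs
    # Only the attributed share of the lift is credited to the tool.
    attributed_value = hours_saved * p.attribution_factor * p.loaded_hourly_rate
    # Rework is priced at the same loaded rate as the throughput.
    quality_cost = p.rework_hours * p.loaded_hourly_rate
    return (attributed_value - quality_cost - p.tool_cost) / p.tool_cost


# Made-up inputs, for illustration only.
example = ToolPeriod(
    assisted_prs=400, hours_per_unassisted_pr=6.0, hours_per_assisted_pr=4.5,
    attribution_factor=0.5, rework_hours=100.0, loaded_hourly_rate=100.0,
    tool_cost=12_000.0,
)
print(f"net ROI: {net_roi(example):.2f}")
```

Setting `attribution_factor` to 1 and `rework_hours` to 0 reproduces the back-of-the-envelope version, which makes it easy to see how much of the headline number the two corrections remove.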

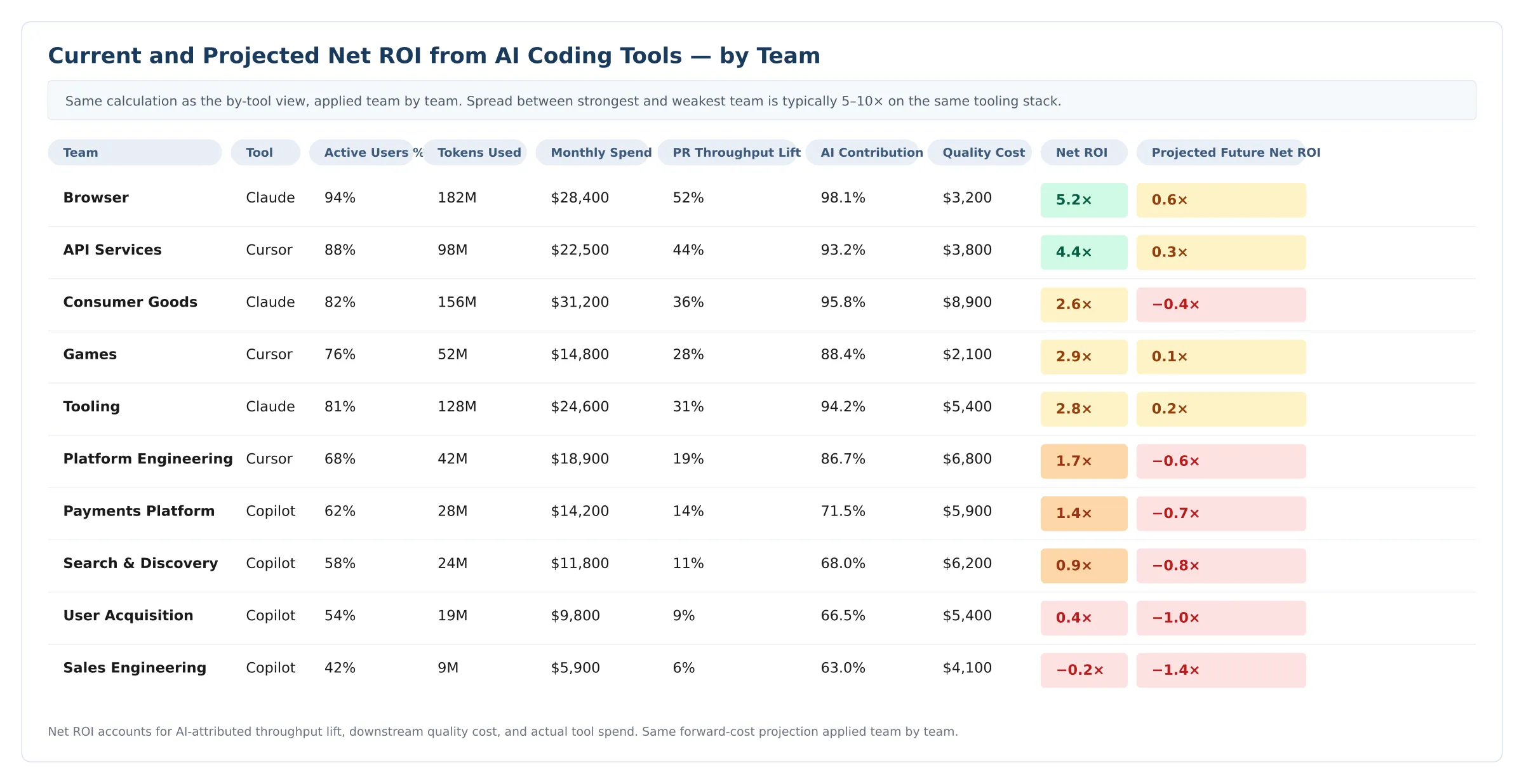

Org averages hide where the variance lives

The same calculation, applied a level down, exposes what the tool average smooths out.

Two patterns show up almost everywhere this is run.

First, the spread between the strongest and weakest team is wider than leadership expects, frequently a factor of five to ten on the same tooling stack. The org-wide ROI is a flattering average; the team view is the actual distribution.

Second, the rankings on this view often don't match the rankings on the vendor dashboard. Vendor dashboards reward throughput. This view rewards throughput that ships clean, and the team driving the most AI-assisted output often turns out to be the team driving the most rework alongside it. The Acceleration Whiplash found 31% of PRs now merging without any review because reviewers cannot keep up with the volume AI generates. Once that cost is priced in, raw throughput stops being a reliable proxy for value.

The teams that surface as problems on this view are rarely the teams that surface as problems on the vendor dashboard. That is the entire point of running it.
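A small sketch of why the two rankings diverge, assuming each team's gross value, rework cost, and tool cost have already been computed. The team names and figures are invented for illustration and do not come from the report.

```python
from typing import NamedTuple


class TeamStats(NamedTuple):
    assisted_prs: int    # raw AI-assisted throughput
    gross_value: float   # attributed value of the throughput lift
    rework_cost: float   # downstream rework, churn, and bugs, priced in
    tool_cost: float     # the team's share of tool spend


def net_value(t: TeamStats) -> float:
    """Value left after rework and tool spend are subtracted."""
    return t.gross_value - t.rework_cost - t.tool_cost


def rank_by_throughput(teams: dict[str, TeamStats]) -> list[str]:
    return sorted(teams, key=lambda name: teams[name].assisted_prs, reverse=True)


def rank_by_net_value(teams: dict[str, TeamStats]) -> list[str]:
    return sorted(teams, key=lambda name: net_value(teams[name]), reverse=True)


# Hypothetical teams and numbers.
teams = {
    "payments": TeamStats(520, 78_000.0, 61_000.0, 14_000.0),
    "platform": TeamStats(310, 52_000.0, 9_000.0, 8_000.0),
    "mobile": TeamStats(180, 21_000.0, 6_000.0, 5_000.0),
}
print("by throughput:", rank_by_throughput(teams))
print("by net value: ", rank_by_net_value(teams))
```

In this made-up data the team with the most assisted PRs lands last once rework is priced in, which is the pattern the team view is meant to surface.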

Two more lenses with the same dynamic

Tool and team are the views that drive most procurement decisions today. Two more deserve a look.

Model and task. Reasoning models cost 5 to 20× as much as simple completion calls. Pointed at the right work (ambiguous refactors, multi-file changes, hard debugging), they earn the premium. Pointed at boilerplate, they don't. Routing the right task to the right model is the single largest lever for managing consumption-pricing exposure without cutting tool access.
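To make the routing arithmetic concrete, here is a minimal sketch. It assumes a hypothetical set of task labels, treats every task as one unit of completion-priced work, and applies a single cost multiple to anything routed to a reasoning model. Real token volumes vary by task, so treat the output as directional.

```python
# Task labels are illustrative; the three reasoning-worthy categories follow the text above.
REASONING_TASKS = {"ambiguous_refactor", "multi_file_change", "hard_debugging"}


def route(task_type: str) -> str:
    """Send only the hard task categories to the reasoning tier."""
    return "reasoning" if task_type in REASONING_TASKS else "completion"


def blended_cost_multiple(task_counts: dict[str, int], reasoning_multiple: float) -> float:
    """Cost of the routed workload relative to running everything on completion."""
    total = sum(task_counts.values())
    if total == 0:
        raise ValueError("task_counts must contain at least one task")
    reasoning = sum(n for task, n in task_counts.items() if route(task) == "reasoning")
    return ((total - reasoning) + reasoning * reasoning_multiple) / total


# Hypothetical monthly task mix.
mix = {"boilerplate": 700, "hard_debugging": 200, "multi_file_change": 100}
print(f"routed workload: {blended_cost_multiple(mix, 10.0):.1f}x completion cost")
```

With this mix and an assumed 10× multiple, routing keeps the workload at roughly 3.7× completion cost, against 10× if every task defaulted to the reasoning model.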

Power user, individual. Inside a single team, consumption is rarely even. One developer can pull as many tokens as the rest of the team combined. Sometimes that's an outlier with extraordinary leverage. Sometimes it's a runaway script or an unbounded agent loop. The team view can't tell the difference. The individual view can.
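One simple way to spot that concentration is to compute each developer's share of the team's tokens and flag anyone at or above half, meaning they use at least as much as everyone else combined. The sketch below assumes per-developer token counts are available. The names, numbers, and 0.5 threshold are illustrative.

```python
def token_shares(tokens_by_dev: dict[str, int]) -> dict[str, float]:
    """Each developer's fraction of the team's total token consumption."""
    total = sum(tokens_by_dev.values())
    if total == 0:
        return {dev: 0.0 for dev in tokens_by_dev}
    return {dev: tokens / total for dev, tokens in tokens_by_dev.items()}


def dominant_users(tokens_by_dev: dict[str, int], threshold: float = 0.5) -> list[str]:
    """Developers whose share meets the threshold (0.5 = as much as the rest combined)."""
    shares = token_shares(tokens_by_dev)
    return [dev for dev, share in shares.items() if share >= threshold]


# Hypothetical monthly token counts for one team.
team_tokens = {"dev_a": 900_000, "dev_b": 300_000, "dev_c": 200_000, "dev_d": 100_000}
print(dominant_users(team_tokens))
```

The flag only says where to look. Whether the flagged developer is a high-leverage outlier or an unbounded agent loop still has to be checked against what that usage shipped.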

Why the pricing shift turns this from interesting to urgent

Under flat-seat pricing, a team or tool with high usage and mediocre economics was a nuisance. Under consumption pricing, the same team is expensive in direct proportion to how much it uses the tool, and the ROI gap widens with every renewal.

Project the same calculation forward. At 3× tool cost, the marginal teams and tools turn negative. At 5×, roughly half the program typically does. At 8× (which the vendor pricing signals suggest is 18 to 24 months out for heavy reasoning-model workloads), most of it goes underwater. The places an engineering org leans on hardest today are often the places whose economics collapse fastest under the new pricing. The time to know which ones is before the repricing, not after.
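The forward projection can be sketched as a sensitivity calculation. This version assumes the value delivered (net of rework) holds constant while tool cost scales by a multiplier. The tool names and figures are hypothetical.

```python
def roi_at_multiplier(value_net_of_rework: float, current_cost: float, multiplier: float) -> float:
    """Net ROI if tool cost is scaled by `multiplier` and delivered value stays flat."""
    cost = current_cost * multiplier
    return (value_net_of_rework - cost) / cost


def break_even_multiplier(value_net_of_rework: float, current_cost: float) -> float:
    """Price multiple at which net ROI reaches zero."""
    return value_net_of_rework / current_cost


# Hypothetical programs: (value net of rework, current tool cost) per period.
programs = {
    "tool_a": (90_000.0, 10_000.0),
    "tool_b": (27_000.0, 12_000.0),
    "tool_c": (40_000.0, 10_000.0),
}
for name, (value, cost) in programs.items():
    row = ", ".join(f"{m}x: {roi_at_multiplier(value, cost, m):+.2f}" for m in (1, 3, 5, 8))
    print(f"{name}  {row}  break-even at {break_even_multiplier(value, cost):.2f}x")
```

The break-even multiple is the more useful number for a renewal conversation, since it states how much repricing a tool or team can absorb before it goes underwater.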

What to do this quarter

Three actions worth taking before your next renewal.

- Analyze. A four-lens ROI map (tool, team, model, individual) with rework priced in and forward cost modeled is a one-quarter exercise. Most orgs find at least one surprise in the first cut.

- Reallocate. Once the lenses are visible, the imbalances usually are too. Reallocation often costs nothing, just moving licenses, model defaults, or task routing toward where the math actually works.

- Renegotiate with data. The next conversation with GitHub, Cursor, or any frontier vendor will go better with a per-tool, per-team, per-model ROI view in hand and a forward-pricing sensitivity attached. Vendors are moving to consumption pricing because usage is their friend. Data is yours.

The orgs that figure this out early win twice

Consumption pricing is not, on its own, a threat. It is a threat only to engineering orgs that cannot see where their AI spend is actually creating value. The orgs that can see it (across tools, teams, models, and individuals) will use the repricing moment to reallocate, renegotiate, and pull ahead. The orgs that cannot see it will absorb the cost increase flat, across every team, and wonder why their AI program is getting more expensive without getting better.

Usage is not value. The gap between the two is where the real AI program decisions live. The orgs that figure out the difference this year will be the orgs with AI programs still working in 2027.

Already running Faros?

Multi-lens AI ROI views are rolling out to your instance over the coming weeks. Talk to your FDE about what's available today and what's coming next.

Not yet a Faros customer?

Let's talk. We run a first-look diagnostic against your data, and you walk out with a multi-lens ROI map you can take into your next renewal conversation. Talk to sales.

This piece builds on the findings in Faros's 2026 AI Engineering Report, The Acceleration Whiplash, which analyzed activity from 22,000 developers across 4,000 teams.
